Apple Vision vs MediaPipe: Which is Better for Yoga Pose Detection?

A technical comparison from building Eight Angle, an iOS yoga app.

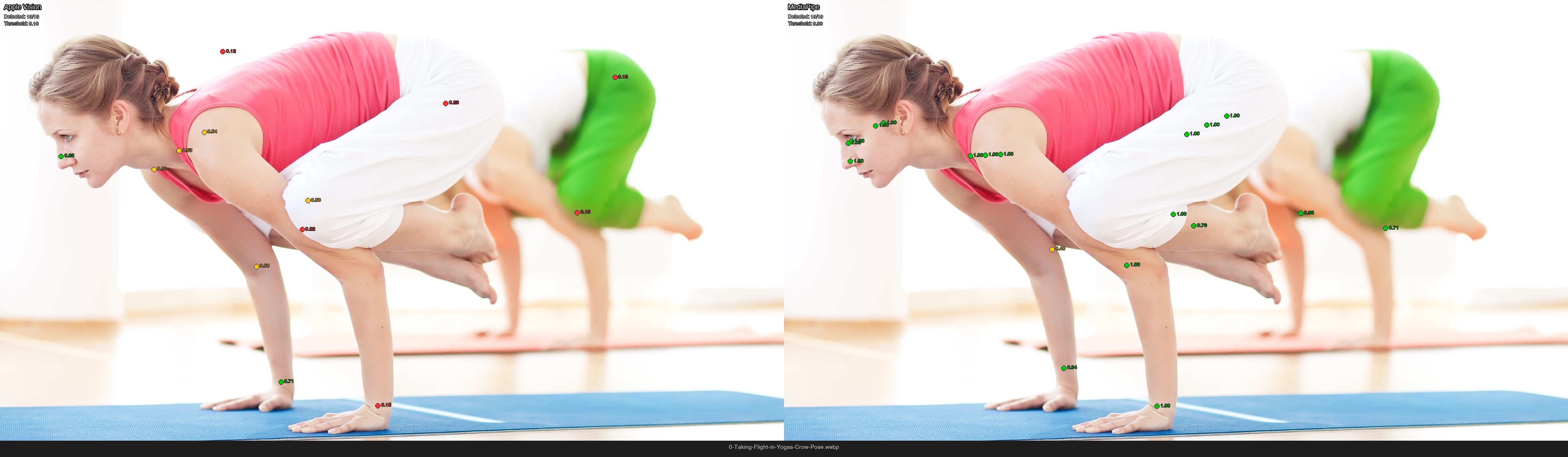

Apple Vision (left) failed to detect this Downward Dog pose. MediaPipe (right) found all 19 joints. Green = high confidence, yellow = medium, red = low.

Apple Vision (left) failed to detect this Downward Dog pose. MediaPipe (right) found all 19 joints. Green = high confidence, yellow = medium, red = low.

When building Eight Angle, we needed reliable pose detection to identify yoga poses in real-time. We started with Apple’s built-in Vision framework, but soon noticed problems: the detector wasn’t finding poses in many of our training images, especially when bodies were inverted or partially occluded. So we tested Google’s MediaPipe as an alternative.

The result? MediaPipe detected 26% more poses and achieved 4% higher classification accuracy. Here’s what we found.

Background: How Pose Detection Works

Pose detection is the computer vision task of finding a person’s body position in an image. The detector outputs a “skeleton” — a set of joint points (ankles, knees, hips, shoulders, wrists, etc.) with X/Y coordinates and confidence scores.

Apple Vision outputs 19 joints. MediaPipe actually provides 33 landmarks, but we use the 19 that map to Vision’s joints to keep the comparison fair. For each joint, you get:

- Position: X and Y coordinates (normalized 0-1)

- Confidence: How certain the detector is (0-1)

Yoga presents unique challenges for pose detectors:

- Inversions: Headstands and handstands flip the expected body orientation

- Occlusions: Arms behind the body, legs crossed over each other

- Unusual shapes: Poses like Wheel or Eight Angle don’t look like typical standing poses

These edge cases matter because a yoga app needs to work across the full range of poses, not just standing positions.

The Comparison Setup

We evaluated both detectors using the same conditions:

- 1,603 training images across 21 yoga pose classes

- Same feature extraction: 31 geometric features (joint angles, distances, symmetry measures)

- Same classifier: Random Forest with 5-fold cross-validation

- Same hardware: All processing on Apple Silicon

The feature pipeline transforms raw joint coordinates into meaningful measurements like “hip angle” or “knee symmetry” — this matters more for classification than raw positions.

Key Finding #1: Detection Rate

The biggest difference was how many poses each detector could find.

| Detector | Detected | Total | Detection Rate |

|---|---|---|---|

| Apple Vision | 1,142 | 1,603 | 71.2% |

| MediaPipe | 1,562 | 1,603 | 97.4% |

MediaPipe detected 420 more poses — a 36.8% increase in usable training data from the same image set.

The gap was largest for challenging poses:

| Pose | Vision Samples | MediaPipe Samples | Gained |

|---|---|---|---|

| forward_fold | 51 | 123 | +72 |

| crow | 47 | 117 | +70 |

| downward_dog | 63 | 130 | +67 |

| bridge | 26 | 91 | +65 |

| handstand | 37 | 91 | +54 |

Vision struggled most with poses where the body is folded or inverted. Forward Fold, for example, has the head below the hips with legs potentially occluding the torso — Vision detected fewer than half of these images.

Crow pose: Vision (left) found only 10 joints, mostly with low confidence. MediaPipe (right) detected all 19 joints clearly.

Crow pose: Vision (left) found only 10 joints, mostly with low confidence. MediaPipe (right) detected all 19 joints clearly.

Key Finding #2: Classification Accuracy

More detected poses also meant better classification:

| Detector | Accuracy | Std Dev |

|---|---|---|

| Apple Vision | 89.6% | ±1.8% |

| MediaPipe | 93.4% | ±1.2% |

MediaPipe’s accuracy was both higher and more consistent (lower standard deviation).

Per-Class Winners

The biggest improvements came from poses that Vision had trouble detecting:

| Pose | Vision | MediaPipe | Change |

|---|---|---|---|

| low_lunge | 66.7% | 92.1% | +25.4% |

| splits | 66.7% | 80.0% | +13.3% |

| forward_fold | 82.4% | 95.1% | +12.8% |

| eight_angle | 55.9% | 68.1% | +12.2% |

| downward_dog | 90.5% | 100.0% | +9.5% |

Low Lunge improved dramatically because MediaPipe could detect the back leg even when it’s behind the front leg. With Vision, those samples had missing joint data that hurt classification.

The Headstand Mystery

Headstand: Both detectors found the pose, but notice MediaPipe’s higher confidence scores (green) vs Vision’s lower confidence (yellow/red).

Headstand: Both detectors found the pose, but notice MediaPipe’s higher confidence scores (green) vs Vision’s lower confidence (yellow/red).

One pose bucked the trend: Headstand went from 100% accuracy with Vision down to 88% with MediaPipe.

This seemed counterintuitive — why would better detection lead to worse classification?

The root cause: with only 41 training samples, the class was vulnerable to noise. When we investigated the 5 misclassified images, we found two patterns:

- Straight-arm variants (3 images): Classified as Handstand because the arms were extended, not bent

- Prep poses with tucked knees (2 images): Classified as Crow because the body position was similar

These weren’t detector errors — they were training data edge cases. MediaPipe detected more variation in the training set, exposing poses that were borderline between classes.

The lesson: more data exposes more edge cases. Vision’s lower detection rate masked these ambiguous samples by simply not including them.

Why the Difference?

MediaPipe’s advantage likely comes from several factors:

Better Occlusion Handling

MediaPipe had dramatically fewer missing joints. For example, knee_symmetry (which requires both knees) was missing in 17% of Vision samples but only 3.5% of MediaPipe samples.

Lower Effective Threshold

We found that MediaPipe’s joint positions are accurate even at low confidence scores. Setting the threshold to 0.001 (essentially accepting all detections) gave the best results:

| Threshold | Accuracy |

|---|---|

| 0.10 | 92.6% |

| 0.05 | 92.4% |

| 0.001 | 93.4% |

This is different from Vision, where low-confidence joints are often inaccurate. MediaPipe seems to output confidence scores more conservatively.

Training Data

MediaPipe was trained on a larger, more diverse pose dataset. Google’s research papers mention handling for occlusion, unusual orientations, and partial visibility — exactly the scenarios that matter for yoga.

What We Chose

For Eight Angle, we switched our training pipeline to MediaPipe. The deciding factors:

- Detection rate mattered most. With limited training images, getting 420 more usable samples was significant. More data means better generalization.

- Yoga poses are inherently challenging. Inversions, arm balances, and deep folds are core to the practice — we couldn’t ignore 30% of those poses.

- The accuracy gain was a bonus. We expected more data to help, but the 4% accuracy improvement confirmed MediaPipe’s joint positions are genuinely more reliable for our use case.

Vision isn’t a bad choice for simpler applications — if you’re just detecting someone standing or walking, it works fine. But for yoga, where the whole point is putting your body in unusual positions, MediaPipe handles the edge cases that matter.

Interested in seeing how Eight Angle helps you level up your yoga practice? Join the waitlist to get early access.

Summary Statistics

| Metric | Apple Vision | MediaPipe | Winner |

|---|---|---|---|

| Detection Rate | 71.2% | 97.4% | MediaPipe (+26%) |

| Classification Accuracy | 89.6% | 93.4% | MediaPipe (+4%) |

| Accuracy Std Dev | ±1.8% | ±1.2% | MediaPipe (more consistent) |

| Usable Samples | 1,142 | 1,562 | MediaPipe (+420) |